Modeling relationships to solve complex problems efficiently

Associate Professor Julian Shun develops high-performance algorithms and frameworks for large-scale graph processing.

The German philosopher Friedrich Nietzsche once said that “invisible threads are the strongest ties.” One could think of “invisible threads” as tying together related objects, like the homes on a delivery driver’s route, or more nebulous entities, such as transactions in a financial network or users in a social network.

Computer scientist Julian Shun studies these types of multifaceted but often invisible connections using graphs, where objects are represented as points, or vertices, and relationships between them are modeled by line segments, or edges.

Shun, a newly tenured associate professor in the Department of Electrical Engineering and Computer Science, designs graph algorithms that could be used to find the shortest path between homes on the delivery driver’s route or detect fraudulent transactions made by malicious actors in a financial network.
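To make the delivery example concrete, here is a minimal sketch of the classic sequential shortest-path computation (Dijkstra's algorithm) on a tiny graph. It assumes a hypothetical route in which each stop is a vertex and each directed edge carries a travel time in minutes. It illustrates the textbook technique only; it is not one of Shun's parallel algorithms, which are designed for graphs many orders of magnitude larger.

```python
import heapq


def shortest_path_lengths(graph, source):
    """Dijkstra's algorithm: shortest distance from source to every reachable vertex.

    graph maps each vertex to a list of (neighbor, nonnegative edge weight) pairs.
    """
    dist = {source: 0}
    frontier = [(0, source)]  # min-heap of (tentative distance, vertex)
    while frontier:
        d, u = heapq.heappop(frontier)
        if d > dist.get(u, float("inf")):
            continue  # stale heap entry: a shorter path to u was already found
        for v, w in graph.get(u, []):
            nd = d + w
            if nd < dist.get(v, float("inf")):
                dist[v] = nd  # found a shorter path to v through u
                heapq.heappush(frontier, (nd, v))
    return dist


# Hypothetical delivery route: vertices are stops, edge weights are minutes of driving.
route = {
    "depot": [("home_a", 4), ("home_b", 1)],
    "home_b": [("home_a", 2), ("home_c", 5)],
    "home_a": [("home_c", 1)],
    "home_c": [],
}
print(shortest_path_lengths(route, "depot"))
# {'depot': 0, 'home_a': 3, 'home_b': 1, 'home_c': 4}
```

Note that the direct edge from the depot to home_a (4 minutes) loses to the detour through home_b (1 + 2 = 3 minutes), which is exactly the kind of non-obvious answer a shortest-path search is meant to find.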

But with the increasing volume of data, such networks have grown to include billions or even trillions of objects and connections. To find efficient solutions, Shun builds high-performance algorithms that leverage parallel computing to rapidly analyze even the most enormous graphs. As parallel programming is notoriously difficult, he also develops user-friendly programming frameworks that make it easier for others to write efficient graph algorithms of their own.

“If you are searching for something in a search engine or social network, you want to get your results very quickly. If you are trying to identify fraudulent financial transactions at a bank, you want to do so in real time to minimize damages. Parallel algorithms can speed things up by using more computing resources,” explains Shun, who is also a principal investigator in the Computer Science and Artificial Intelligence Laboratory (CSAIL).

Such algorithms are frequently used in online recommendation systems. Search for a product on an e-commerce website and odds are you’ll quickly see a list of related items you could also add to your cart. That list is generated with the help of graph algorithms that leverage parallelism to rapidly find related items across a massive network of users and available products.

Campus connections

As a teenager, Shun’s only experience with computers was a high school class on building websites. More interested in math and the natural sciences than technology, he intended to major in one of those subjects when he enrolled as an undergraduate at the University of California at Berkeley.

But during his first year, a friend recommended he take an introductory computer science class. While he wasn’t sure what to expect, he decided to sign up.

“I fell in love with programming and designing algorithms. I switched to computer science and never looked back,” he recalls.

That initial computer science course was self-paced, so Shun taught himself most of the material. He enjoyed the logical aspects of developing algorithms and the short feedback loop of computer science problems. Shun could input his solutions into the computer and immediately see whether he was right or wrong. And the errors in the wrong solutions would guide him toward the right answer.

“I’ve always thought that it was fun to build things, and in programming, you are building solutions that do something useful. That appealed to me,” he adds.

After graduation, Shun spent some time in industry but soon realized he wanted to pursue an academic career. At a university, he knew he would have the freedom to study problems that interested him.

Getting into graphs

He enrolled as a graduate student at Carnegie Mellon University, where he focused his research on applied algorithms and parallel computing.

As an undergraduate, Shun had taken theoretical algorithms classes and practical programming courses, but the two worlds didn’t connect. He wanted to conduct research that combined theory and application. Parallel algorithms were the perfect fit.

“In parallel computing, you have to care about practical applications. The goal of parallel computing is to speed things up in real life, so if your algorithms aren’t fast in practice, then they aren’t that useful,” he says.

At Carnegie Mellon, he was introduced to graph datasets, where objects in a network are modeled as vertices connected by edges. He felt drawn to the many applications of these types of datasets, and the challenging problem of developing efficient algorithms to handle them.

After completing a postdoctoral fellowship at Berkeley, Shun sought a faculty position and decided to join MIT. He had been collaborating with several MIT faculty members on parallel computing research, and was excited to join an institute with such a breadth of expertise.

In one of his first projects after joining MIT, Shun joined forces with Department of Electrical Engineering and Computer Science professor and fellow CSAIL member Saman Amarasinghe, an expert on programming languages and compilers, to develop a programming framework for graph processing known as GraphIt. The easy-to-use framework, which generates efficient code from high-level specifications, performed about five times faster than the next best approach.

“That was a very fruitful collaboration. I couldn’t have created a solution that powerful if I had worked by myself,” he says.

Shun also expanded his research focus to include clustering algorithms, which seek to group related datapoints together. He and his students build parallel algorithms and frameworks for quickly solving complex clustering problems, which can be used for applications like anomaly detection and community detection.

Dynamic problems

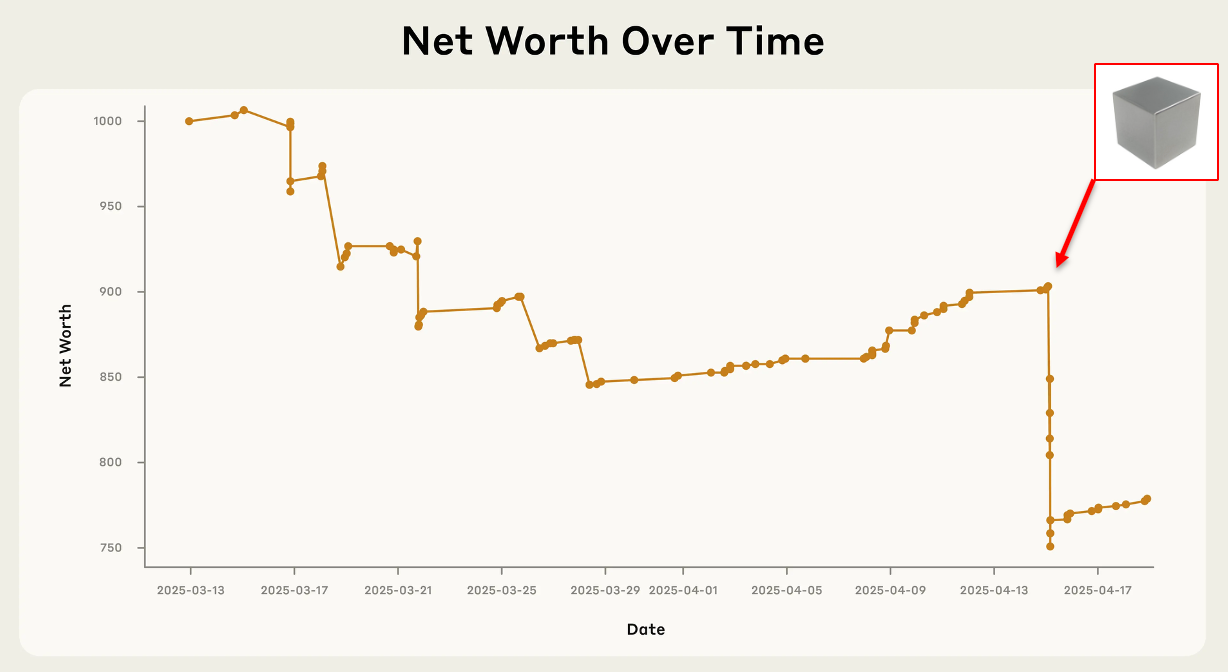

Recently, he and his collaborators have been focusing on dynamic problems where data in a graph network change over time.

When a dataset has billions or trillions of data points, rerunning an algorithm from scratch after every small change could be extremely expensive computationally. He and his students design parallel algorithms that process many updates at the same time, improving efficiency while preserving accuracy.
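The contrast between recomputing and updating can be illustrated with a deliberately simple case. The sketch below, an assumption-laden toy rather than a description of Shun's algorithms, tracks which vertices are connected as batches of new edges arrive, using a union-find structure so that each batch only touches the vertices it involves instead of rescanning the whole graph. It handles insertions only and runs sequentially; the class name `IncrementalComponents` is hypothetical, and real batch-dynamic algorithms also support deletions and process each batch in parallel, which is far harder.

```python
class IncrementalComponents:
    """Connected components of a graph whose edges are only ever added (hypothetical sketch).

    Each batch of new edges is merged into the existing union-find structure,
    so the work per batch grows with the batch, not with the size of the graph.
    """

    def __init__(self, num_vertices):
        self.parent = list(range(num_vertices))  # every vertex starts as its own component
        self.size = [1] * num_vertices

    def find(self, v):
        # Path halving: point each visited vertex at its grandparent while walking to the root.
        while self.parent[v] != v:
            self.parent[v] = self.parent[self.parent[v]]
            v = self.parent[v]
        return v

    def insert_batch(self, edges):
        # Apply a batch of edge insertions without recomputing from scratch.
        for u, v in edges:
            ru, rv = self.find(u), self.find(v)
            if ru == rv:
                continue  # endpoints already in the same component
            if self.size[ru] < self.size[rv]:
                ru, rv = rv, ru
            self.parent[rv] = ru  # union by size: attach the smaller tree under the larger
            self.size[ru] += self.size[rv]

    def connected(self, u, v):
        return self.find(u) == self.find(v)


cc = IncrementalComponents(6)
cc.insert_batch([(0, 1), (2, 3)])
print(cc.connected(0, 1), cc.connected(1, 2))  # True False
cc.insert_batch([(1, 2), (4, 5)])
print(cc.connected(0, 3), cc.connected(3, 4))  # True False
```

Supporting edge deletions is where the difficulty lies: removing an edge may or may not split a component, and union-find cannot undo a merge, which is one reason fully dynamic graph problems remain an active research area.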

But these dynamic problems also pose one of the biggest challenges Shun and his team must overcome. Because few dynamic datasets are available for testing algorithms, the team often must generate synthetic data, which may not be realistic; algorithms tuned on such data could perform worse in the real world.

In the end, his goal is to develop dynamic graph algorithms that perform efficiently in practice while also providing theoretical guarantees. That ensures they will be applicable across a broad range of settings, he says.

Shun expects dynamic parallel algorithms to have an even greater research focus in the future. As datasets continue to become larger, more complex, and more rapidly changing, researchers will need to build more efficient algorithms to keep up.

He also expects new challenges to come from advancements in computing technology, since researchers will need to design new algorithms to leverage the properties of novel hardware.

“That’s the beauty of research — I get to try and solve problems other people haven’t solved before and contribute something useful to society,” he says.