AI method radically speeds predictions of materials’ thermal properties

The approach could help engineers design more efficient energy-conversion systems and faster microelectronic devices, reducing waste heat.

It is estimated that about 70 percent of the energy generated worldwide ends up as waste heat.

If scientists could better predict how heat moves through semiconductors and insulators, they could design more efficient power generation systems. However, the thermal properties of materials can be exceedingly difficult to model.

The trouble comes from phonons, quasiparticles that represent collective vibrations of a crystal lattice and carry heat. Some of a material’s thermal properties depend on a quantity called the phonon dispersion relation, which can be incredibly hard to obtain, let alone use in the design of a system.

A team of researchers from MIT and elsewhere tackled this challenge by rethinking the problem from the ground up. The result of their work is a new machine-learning framework that can predict phonon dispersion relations up to 1,000 times faster than other AI-based techniques, with comparable or even better accuracy. Compared to more traditional, non-AI-based approaches, it could be 1 million times faster.

This method could help engineers design energy generation systems that produce more power, more efficiently. It could also be used to develop more efficient microelectronics, since managing heat remains a major bottleneck to speeding up electronics.

“Phonons are the culprit for the thermal loss, yet obtaining their properties is notoriously challenging, either computationally or experimentally,” says Mingda Li, associate professor of nuclear science and engineering and senior author of a paper on this technique.

Li is joined on the paper by co-lead authors Ryotaro Okabe, a chemistry graduate student, and Abhijatmedhi Chotrattanapituk, an electrical engineering and computer science graduate student; Tommi Jaakkola, the Thomas Siebel Professor of Electrical Engineering and Computer Science at MIT; and others at MIT, Argonne National Laboratory, Harvard University, the University of South Carolina, Emory University, the University of California at Santa Barbara, and Oak Ridge National Laboratory. The research appears in Nature Computational Science.

Predicting phonons

Heat-carrying phonons are tricky to predict because they have an extremely wide frequency range, and the particles interact and travel at different speeds.

A material’s phonon dispersion relation is the relationship between energy and momentum of phonons in its crystal structure. For years, researchers have tried to predict phonon dispersion relations using machine learning, but there are so many high-precision calculations involved that models get bogged down.
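
To make the idea of a dispersion relation concrete, here is a minimal Python sketch of a standard textbook toy model: a one-dimensional chain of identical atoms joined by identical springs. It is not taken from the paper. The function name `chain_dispersion` and its parameters are hypothetical, chosen for illustration. For this chain the relation has a closed form, whereas a real crystal has many vibrational branches spread over a three-dimensional momentum space, which is what makes the general problem expensive.

```python
import math

def chain_dispersion(k, spring_constant=1.0, mass=1.0, spacing=1.0):
    """Angular frequency of a phonon with wave vector k in a 1D chain.

    Textbook result for identical atoms and nearest-neighbor springs:
        omega(k) = 2 * sqrt(K / m) * |sin(k * a / 2)|
    Phonon energy is hbar * omega and crystal momentum is hbar * k,
    so this curve is the energy-momentum relationship for the toy chain.
    """
    return 2.0 * math.sqrt(spring_constant / mass) * abs(math.sin(0.5 * k * spacing))

# Sample the first Brillouin zone, k in [-pi/a, pi/a] (illustrative units).
spacing = 1.0
num_points = 9
k_points = [(-math.pi + 2.0 * math.pi * i / (num_points - 1)) / spacing
            for i in range(num_points)]
frequencies = [chain_dispersion(k, spacing=spacing) for k in k_points]

for k, omega in zip(k_points, frequencies):
    print(f"k = {k:+.3f}  omega = {omega:.3f}")
```

The frequency vanishes at the zone center and peaks at the zone boundary, and the slope of the curve at each point gives the speed at which that vibration carries energy.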

“If you have 100 CPUs and a few weeks, you could probably calculate the phonon dispersion relation for one material. The whole community really wants a more efficient way to do this,” says Okabe.

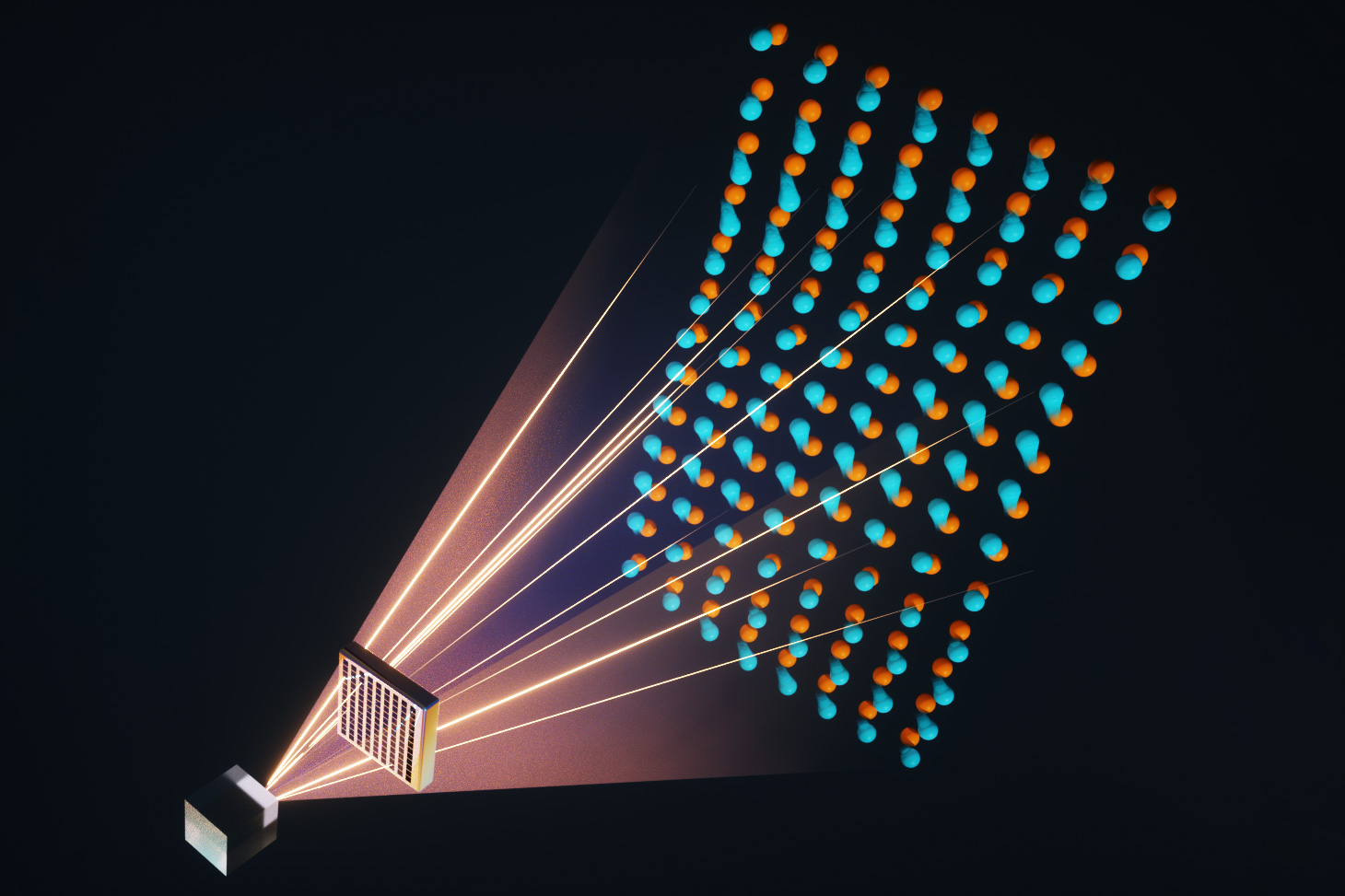

The machine-learning models scientists often use for these calculations are known as graph neural networks (GNNs). A GNN converts a material’s atomic structure into a crystal graph comprising multiple nodes, which represent atoms, connected by edges, which represent the bonds between them.
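
A crystal graph of this kind can be sketched in a few lines of Python. The example below is an illustration under simplifying assumptions, not the construction used in the paper: it assumes a cubic cell, links two atoms when their nearest periodic images lie within a distance cutoff, and keeps at most one edge per pair. The function `build_crystal_graph` and its arguments are hypothetical names.

```python
import math
from itertools import combinations

def build_crystal_graph(positions, species, cell_length, cutoff):
    """Return (nodes, edges) for atoms in a cubic cell with periodic boundaries.

    nodes: one chemical symbol per atom.
    edges: (i, j, distance) for each pair whose nearest periodic images
           are no farther apart than `cutoff`.
    """
    nodes = list(species)
    edges = []
    for i, j in combinations(range(len(positions)), 2):
        delta = []
        for a, b in zip(positions[i], positions[j]):
            d = b - a
            # Minimum-image convention: shift by whole cell lengths so the
            # separation along each axis is as short as possible.
            d -= cell_length * round(d / cell_length)
            delta.append(d)
        distance = math.sqrt(sum(d * d for d in delta))
        if distance <= cutoff:
            edges.append((i, j, distance))
    return nodes, edges

# Two-atom cubic cell (a CsCl-like arrangement, lengths in angstroms).
nodes, edges = build_crystal_graph(
    positions=[(0.0, 0.0, 0.0), (2.0, 2.0, 2.0)],
    species=["Cs", "Cl"],
    cell_length=4.0,
    cutoff=3.6,
)
print(nodes)
print(edges)
```

Because the node list mirrors the atom list, the size of such a graph is fixed by the crystal structure itself.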

While GNNs work well for calculating many quantities, like magnetization or electrical polarization, they are not flexible enough to efficiently predict an extremely high-dimensional quantity like the phonon dispersion relation. Because phonons can travel around atoms on X, Y, and Z axes, their momentum space is hard to model with a fixed graph structure.

To gain the flexibility they needed, Li and his collaborators devised virtual nodes.

They create what they call a virtual node graph neural network (VGNN) by adding a series of flexible virtual nodes to the fixed crystal structure to represent phonons. The virtual nodes enable the output of the neural network to vary in size, so it is not restricted by the fixed crystal structure.

Virtual nodes are connected to the graph in such a way that they can only receive messages from real nodes. While virtual nodes will be updated as the model updates real nodes during computation, they do not affect the accuracy of the model.

“The way we do this is very efficient in coding. You just generate a few more nodes in your GNN. The physical location doesn’t matter, and the real nodes don’t even know the virtual nodes are there,” says Chotrattanapituk.
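
The one-way wiring described above can be illustrated with a toy Python sketch. It is not the authors’ architecture: it uses plain sum aggregation with no learned weights, and the helper names `message_passing_step` and `add_virtual_nodes` are hypothetical. It shows only two points from the text: virtual nodes receive messages from real nodes without sending any back, and the number of virtual nodes, and therefore the size of the output, can be chosen freely.

```python
def message_passing_step(features, edges):
    """One round of sum aggregation over directed edges (source, target).

    Each node keeps its own vector and adds the vectors of the nodes that
    send to it. All reads use the features from before the step.
    """
    updated = [list(vec) for vec in features]
    for source, target in edges:
        for d, value in enumerate(features[source]):
            updated[target][d] += value
    return updated

def add_virtual_nodes(real_features, real_bonds, num_virtual):
    """Append zero-initialized virtual nodes fed by one-way edges."""
    num_real = len(real_features)
    dim = len(real_features[0])
    features = [list(vec) for vec in real_features]
    features += [[0.0] * dim for _ in range(num_virtual)]
    edges = []
    for i, j in real_bonds:
        edges += [(i, j), (j, i)]      # bonds carry messages both ways
    for v in range(num_real, num_real + num_virtual):
        for r in range(num_real):
            edges.append((r, v))       # real -> virtual only
    return features, edges

real_features = [[1.0, 0.0], [0.0, 2.0]]
real_bonds = [(0, 1)]

# Real graph alone.
plain_features, plain_edges = add_virtual_nodes(real_features, real_bonds, 0)
plain = message_passing_step(plain_features, plain_edges)

# Same graph with three virtual nodes attached.
vg_features, vg_edges = add_virtual_nodes(real_features, real_bonds, 3)
with_virtual = message_passing_step(vg_features, vg_edges)

print(with_virtual[:2] == plain)   # real nodes are unaffected by virtual ones
print(with_virtual[2:])            # output is read from the virtual nodes
```

In this toy version every virtual node ends up with the same vector because all of them start from zero and share the same connections. A trained model would need learned, node-specific transformations to make each virtual node represent something different.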

Cutting out complexity

Since it has virtual nodes to represent phonons, the VGNN can skip many complex calculations when estimating phonon dispersion relations, which makes the method more efficient than a standard GNN.

The researchers proposed three different versions of VGNNs with increasing complexity. Each can be used to predict phonons directly from a material’s atomic coordinates.

Because their approach has the flexibility to rapidly model high-dimensional properties, they can use it to estimate phonon dispersion relations in alloy systems. These complex combinations of metals and nonmetals are especially challenging for traditional approaches to model.

The researchers also found that VGNNs offered slightly greater accuracy when predicting a material’s heat capacity. In some instances, prediction errors were two orders of magnitude lower with their technique.
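
Heat capacity is one example of a thermal property that follows from phonon frequencies. The Python sketch below uses the standard harmonic-approximation formula, in which each vibrational mode contributes independently. It is a textbook illustration and not necessarily how the researchers evaluated their predictions. The function names and the sample frequencies are hypothetical.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J s
K_B = 1.380649e-23       # Boltzmann constant, J/K

def mode_heat_capacity(omega, temperature):
    """Heat capacity (J/K) of one harmonic mode.

    omega is the angular frequency in rad/s. With x = hbar*omega/(k_B*T):
        C = k_B * x^2 * exp(x) / (exp(x) - 1)^2
    """
    x = HBAR * omega / (K_B * temperature)
    # expm1 keeps exp(x) - 1 accurate when x is small (high temperature).
    return K_B * x * x * math.exp(x) / math.expm1(x) ** 2

def harmonic_heat_capacity(omegas, temperature):
    """Sum the contributions of all supplied phonon modes."""
    return sum(mode_heat_capacity(w, temperature) for w in omegas)

# Illustrative mode frequencies in rad/s, not data from the paper.
sample_modes = [1.0e13, 2.0e13, 5.0e13]
for temperature in (50.0, 300.0, 1000.0):
    c = harmonic_heat_capacity(sample_modes, temperature)
    print(f"T = {temperature:6.1f} K  C = {c / K_B:.3f} k_B")
```

At high temperature each mode approaches one Boltzmann constant, and at low temperature high-frequency modes freeze out, so errors in the predicted frequencies translate directly into errors in the heat capacity.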

A VGNN could be used to calculate phonon dispersion relations for a few thousand materials in just a few seconds with a personal computer, Li says.

This efficiency could enable scientists to search a larger space when seeking materials with certain thermal properties, such as superior thermal storage, energy conversion, or superconductivity.

Moreover, the virtual node technique is not exclusive to phonons, and could also be used to predict challenging optical and magnetic properties.

In the future, the researchers want to refine the technique so virtual nodes have greater sensitivity to capture small changes that can affect phonon structure.

“Researchers got too comfortable using graph nodes to represent atoms, but we can rethink that. Graph nodes can be anything. And virtual nodes are a very generic approach you could use to predict a lot of high-dimensional quantities,” Li says.

“The authors’ innovative approach significantly augments the graph neural network description of solids by incorporating key physics-informed elements through virtual nodes, for instance, informing wave-vector dependent band-structures and dynamical matrices,” says Olivier Delaire, associate professor in the Thomas Lord Department of Mechanical Engineering and Materials Science at Duke University, who was not involved with this work. “I find that the level of acceleration in predicting complex phonon properties is amazing, several orders of magnitude faster than a state-of-the-art universal machine-learning interatomic potential. Impressively, the advanced neural net captures fine features and obeys physical rules. There is great potential to expand the model to describe other important material properties: Electronic, optical, and magnetic spectra and band structures come to mind.”

This work is supported by the U.S. Department of Energy, the National Science Foundation, a MathWorks Fellowship, a Sow-Hsin Chen Fellowship, the Harvard Quantum Initiative, and Oak Ridge National Laboratory.